We spend a lot of time in Google Analytics, going through the numbers and trying to figure out how and why they change. Why did we receive more traffic from social media this month? Why did fewer people find us on Google last month? Why did people spend less time on our website as of late?

These questions allow us to dig deeper and find out what marketing efforts are working and what needs to be adjusted.

Understanding your website traffic can be a complicated process. Tools like Google Analytics make it easy to track your traffic, but interpreting the numbers isn’t always so straightforward, especially when certain factors can skew your metrics.

The biggest traffic-skewing culprit: bot traffic.

Read on to find out all you need to know about bots and how they affect your site.

What are Bots?

Think of website bots as automated robots that operate without human intervention. Instead of physical machines, they are software programs built to perform specific, usually repetitive tasks on the internet at speeds humans can’t match.

Bots can be official and legitimate (read: good) or bad, unofficial and spam-like (read: malicious).

Good Bots:

Good bots help to make the internet a better place for everyone.

- Spider bots are used by search engines like Google to “crawl” your website and analyze its content so Google knows when to list your website as a potential answer to a user search.

- Trader bots search through online marketplaces like Amazon to find the best deals. They’re used by retailers to beat out the competition on price.

- Media and data bots provide real-time updates on things like weather and news, helping to provide the most up-to-date information for the countless apps we rely on.

- Copyright bots search for material that’s been copied or plagiarized.

Bad Bots:

Unfortunately bad bots come in even more flavors than good bots, and the most recent study on bot traffic across the web shows there are more bad bots than good bots. This isn’t a comprehensive list, but the more common types of malicious website bots include:

- Spam and email bots spread spam content and collect personal information, like phone numbers and email addresses, when users submit it on websites.

- Website scraper bots steal content from a site and republish it in numerous other places across the web.

- Click bots are ad fraud bots that intentionally click on your ads, skewing your data and costing you money.

What is bot traffic?

Bot traffic is website traffic generated artificially by online bots. Typically, any bot traffic on your website is “bad” bot traffic, because it skews your website metrics and makes it look like you have far more visitors than you do.

Distinguishing bot traffic from human traffic isn’t impossible, but it’s often difficult to account for every bot scouring your website. Still, it’s important to consider bots when viewing your website metrics, because a large chunk of your traffic isn’t coming from real people.

How Much of Your Website Traffic is Bots?

A study that looked at 17 billion website visits across 100,000 domains found that bots make up 52% of all website traffic. Bad bots account for about 29% of all traffic, and good bots account for the other 23%.

This doesn’t mean that roughly half of your own website traffic is bots. The number can vary from one domain to the next, but it’s safe to assume that a healthy proportion of your traffic isn’t real.

And if you think that your level of bot traffic will be lower because your site doesn’t generate a ton of real traffic, that’s not necessarily the case. In fact, websites that are less popular among humans tend to attract more bots. If you aren’t already filtering out bot traffic from your website analytics, it could account for upwards of 20-30% of your website traffic.

How to Identify Bot Traffic

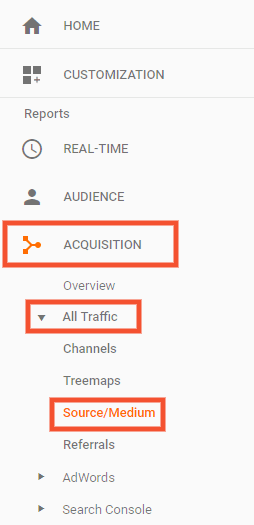

There are a few clear indicators that a specific source of traffic is an online bot. First off, most of your bot traffic will fall under one of two sources: referral or direct. Within Google Analytics go to Acquisition → All Traffic → Source/Medium.

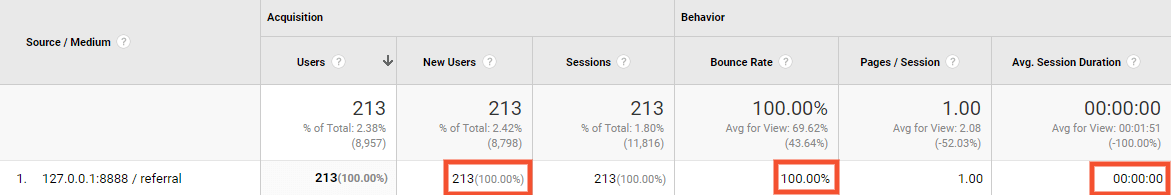

You can tell your referral traffic was generated by bots if:

- It has a new session percentage of 100%

- It has a bounce rate of 100%

- It has an average session duration of 0 seconds

It’s unlikely that any legitimate source of traffic would have numbers like this, so seeing all three is a clear indicator that you’re looking at website bots.
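
If you export the Source/Medium report, you can apply the same three checks in a few lines of code. The following is a minimal Python sketch, not a Google Analytics feature. The field names (`pct_new_sessions`, `bounce_rate`, `avg_session_duration`) and the sample sources are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class SourceRow:
    """One row of an exported Source/Medium report (field names are assumed)."""
    source: str
    pct_new_sessions: float      # percent, 0-100
    bounce_rate: float           # percent, 0-100
    avg_session_duration: float  # seconds


def looks_like_bot(row: SourceRow) -> bool:
    """Flag a row only when all three signals are present at once."""
    return (
        row.pct_new_sessions >= 100.0
        and row.bounce_rate >= 100.0
        and row.avg_session_duration <= 0.0
    )


# Hypothetical sample data, not real report output.
rows = [
    SourceRow("spam-referrer.example / referral", 100.0, 100.0, 0.0),
    SourceRow("google / organic", 68.2, 41.5, 95.0),
    SourceRow("partner-site.example / referral", 100.0, 100.0, 12.0),
]

suspects = [row.source for row in rows if looks_like_bot(row)]
print(suspects)
```

Requiring all three signals together keeps the check conservative: a real but low-volume referrer can hit 100% on one metric by chance, but rarely on all three.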

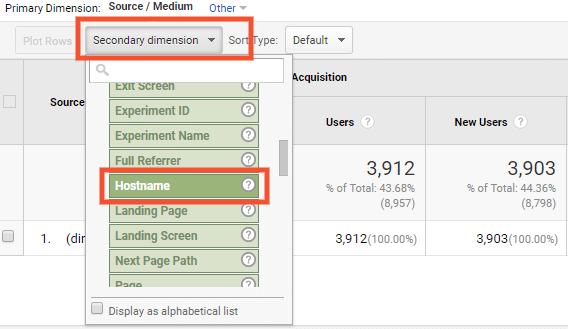

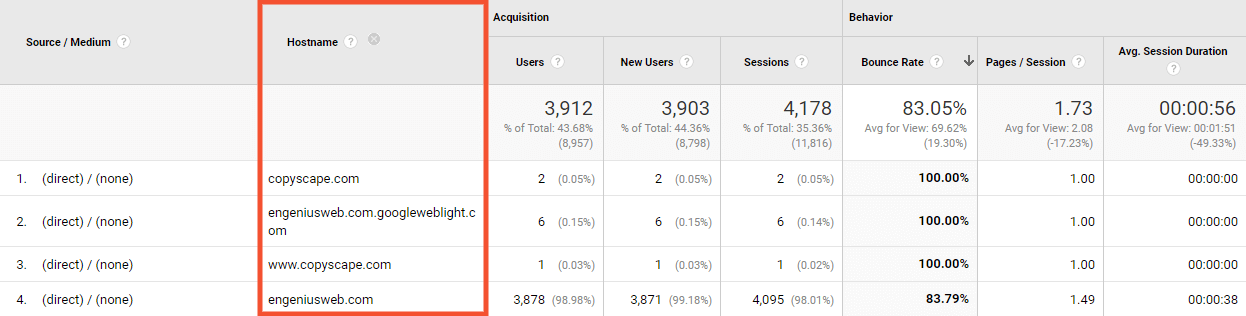

For direct traffic generated by bots you’ll see the same numbers as above, but you need to take one extra step. When looking at direct traffic within Google Analytics, choose the secondary dimension “Hostname”.

If the traffic is real, the hostname will be the domain of your website or one of its subdomains. If it’s anything else, it’s safe to assume you’re looking at bot traffic.
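
The hostname rule can be written as a small check. This is a rough Python sketch under our own assumptions: the helper name `is_valid_hostname` and the sample hostnames are made up for illustration, and the only real domain used is ours.

```python
from typing import Iterable


def is_valid_hostname(hostname: str, own_domains: Iterable[str]) -> bool:
    """Return True if hostname is one of our domains or a subdomain of one."""
    host = hostname.strip().lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in own_domains)


OWN_DOMAINS = ["engeniusweb.com"]

# Hypothetical hostnames as they might appear in the Hostname dimension.
samples = [
    "engeniusweb.com",
    "www.engeniusweb.com",
    "(not set)",
    "engeniusweb.com.spam.example",
]

for host in samples:
    label = "real" if is_valid_hostname(host, OWN_DOMAINS) else "likely bot"
    print(f"{host}: {label}")
```

Matching on the end of the hostname, rather than anywhere inside it, means a lookalike such as `engeniusweb.com.spam.example` is still treated as suspicious.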

How Bots Affect Your Website Metrics

Malicious bots are dangerous because they’re geared towards stealing information or committing fraud, but they also skew your website numbers dramatically. This is especially frustrating if you use numbers like overall sessions and conversion rates to gauge the success of your website and other marketing efforts.

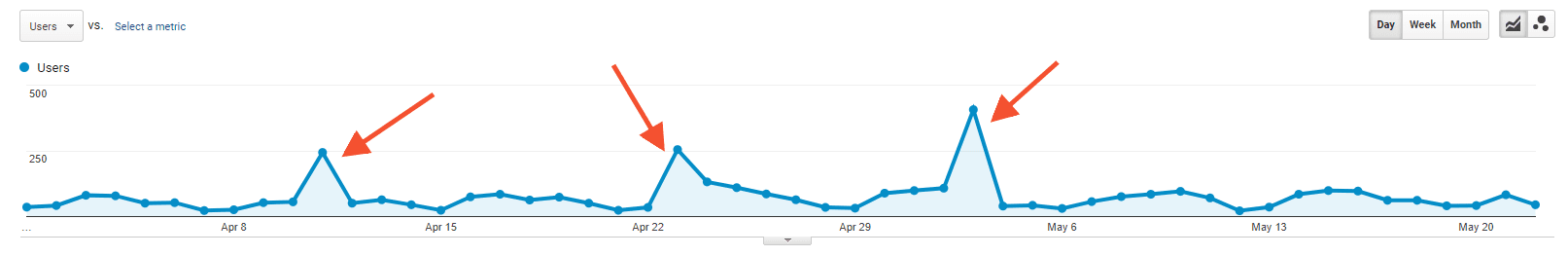

Bots cause huge spikes in traffic, so if you don’t know to look out for them you may assume you drove a bunch of valuable traffic to your website because of an ad you ran or special you promoted, when in fact that traffic isn’t real.

And it’s more than just overall traffic numbers. Because bot traffic has high bounce rates and low session durations — which are good indicators of whether or not your website is engaging and contains the content your audience is looking for — it may make you think that your website needs to be changed in some way, or that the messaging in your marketing materials is inaccurate or misleading. This could lead to you making unnecessary changes, wasting time and money.

How to Clean Up Your Analytics

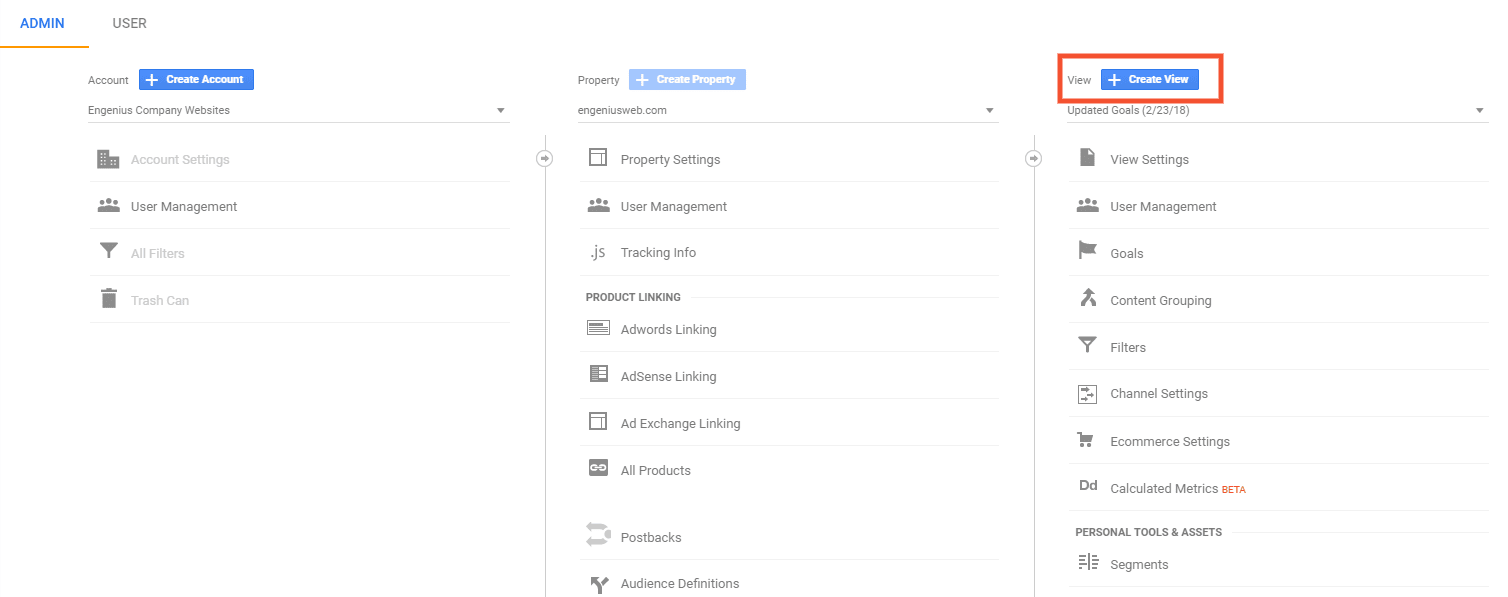

There are a number of steps you can take to ensure the numbers you look at in Google Analytics are legitimate. The first step is to create multiple Views within your Analytics account. Google itself recommends this: keep an unfiltered View that includes all the traffic data from your site, including bots, as well as a filtered View that excludes traffic from bots, among other things.

Creating a new View is easy, but keep in mind that new Views don’t include historical Analytics data. That’s why it’s ideal to set them up when first connecting Analytics with your site.

To create a View:

- Go to the Admin panel of Analytics and look at the third column. You’ll see the option to “Create View.”

- Choose what data you want to track, assign the View a name, and you’re done.

After you’ve created two Views within Analytics, it’s time to set up the proper filters.

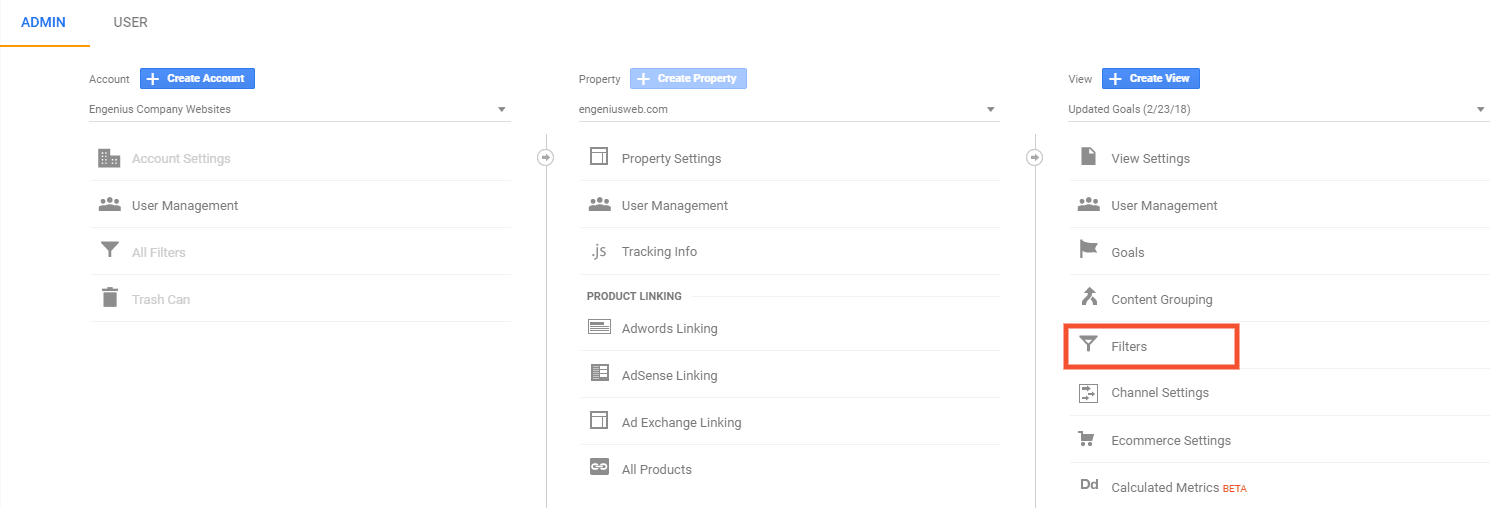

Filter Bot Traffic for Referral Sources:

- Navigate to the Admin panel of Analytics.

- Go to the view column and click Filters. There you can add an appropriate filter for every source of bot traffic on your site.

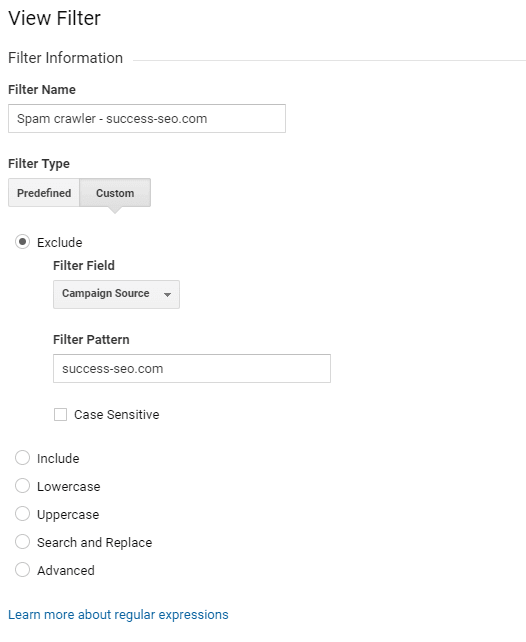

TIP: Start by giving the filter a name that’s easy to identify and manage. For each of the Engenius bot filters, we start with the name “Spam crawler” and then list the URL or other specifics of the filter. That way it’s easy to differentiate it from any other filters we may use.

- Select the “Custom” filter type and choose to “exclude certain traffic.” A dropdown menu for the “Filter Field” will appear.

- Choose the “Campaign Source” option and within the “Filter Pattern” box enter the URL of the bot traffic.

- Save your filter and you’re done.

If you’ve never filtered bots from your website before there will likely be a large number you need to exclude at first, but afterwards you should only have to look for new bots every few months.

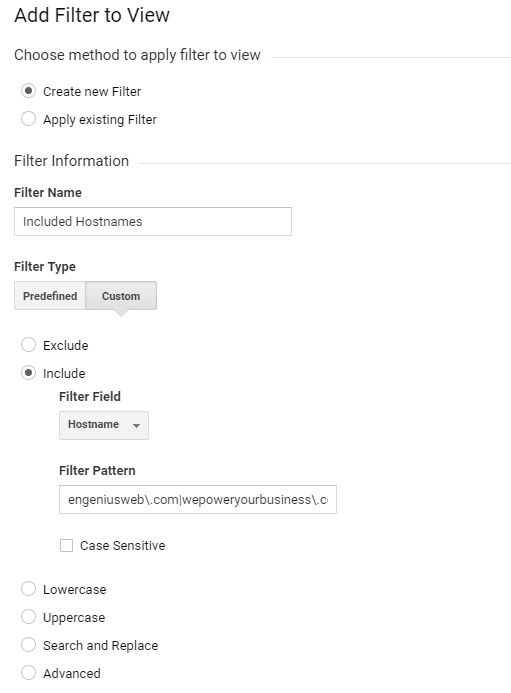

Filter Bot Traffic for Direct Sources:

- Create a list of every valid hostname you know of. For small to medium websites this will likely only include your main domain and some subdomains.

- Navigate to the Filters section of Analytics and “add a new filter.” This will be a custom filter. Choose to “only include certain sources.”

- From the “Filter Field” dropdown choose the “Hostname” option.

- In the “Filter Pattern” box, enter the domain of your website with a backslash before the “.com” portion (“engeniusweb\.com”). The pattern is a regular expression, and the backslash makes the dot match a literal period instead of any character.

TIP: You don’t need to include subdomains, because the pattern matches any hostname that contains your domain. If there are any other valid URLs, you’ll want to include those as well. Add them to the “Filter Pattern” with a vertical bar (|) separating each distinct URL. Here’s an example of what that might look like:

engeniusweb\.com|wepoweryourbusiness\.com|validurl\.com

- Once you’ve added the proper “Filter Pattern” save your filter and you’re finished!
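
Before saving, it can help to test the pattern against a few hostnames. The sketch below uses Python’s `re` module as a stand-in, on the assumption that Analytics treats the pattern as a partial-match, case-insensitive regular expression. The Analytics regex engine may differ in details, and the sample hostnames are hypothetical.

```python
import re

# Pattern from the example above, written in lowercase.
FILTER_PATTERN = r"engeniusweb\.com|wepoweryourbusiness\.com|validurl\.com"
pattern = re.compile(FILTER_PATTERN, re.IGNORECASE)


def is_included(hostname: str) -> bool:
    """Partial match: a hostname passes if the pattern appears anywhere in it."""
    return pattern.search(hostname) is not None


for host in ["engeniusweb.com", "blog.engeniusweb.com", "spam-host.example", "(not set)"]:
    print(host, is_included(host))

# Why the backslash matters: an unescaped dot matches any character.
unescaped = re.compile(r"engeniusweb.com")
print(unescaped.search("engeniuswebXcom") is not None)  # True
print(pattern.search("engeniuswebXcom") is not None)    # False
```

Because the match is partial, `blog.engeniusweb.com` passes without being listed, which is why subdomains don’t need their own entries.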

Bots are Here to Stay

Adding these filters doesn’t mean the bots aren’t on your site. It means their activity won’t be reflected when you review your analytics. We’ll use our own website as an example. During the month of April 2018, our unfiltered Analytics View says we received 2,308 sessions, but our filtered View says we received 1,681 sessions.

That’s a difference of 627 sessions, or about 27% of the unfiltered total. If we only saw the unfiltered View, we would think our marketing efforts were more successful than they are.
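
For anyone who wants to check the math with their own numbers, here is a short Python sketch using the two session counts above. The variable names are ours; only the two counts come from our Analytics Views.

```python
unfiltered_sessions = 2308  # April 2018, unfiltered View
filtered_sessions = 1681    # April 2018, filtered View

bot_sessions = unfiltered_sessions - filtered_sessions

# Share of the unfiltered total that was removed by the filters.
share_of_unfiltered = bot_sessions / unfiltered_sessions

# How much the unfiltered number overstates the filtered (real) traffic.
inflation_over_filtered = bot_sessions / filtered_sessions

print(f"{bot_sessions} sessions filtered out")
print(f"{share_of_unfiltered:.1%} of unfiltered sessions")
print(f"{inflation_over_filtered:.1%} inflation over filtered sessions")
```

The two percentages answer different questions: the first is how much of the raw total was bots, while the second is how far the raw total overstates real traffic.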

Whether or not we like it, bots are here to stay. Even the “good” Google spider bots can still distort your website analytics. The best defense is to filter out the bots, ensuring you get an accurate read on your website and marketing efforts.

We know this is a lot to take in. If you have more questions, reach out to our care team.
